Introduction: The Friday Night Shift in Silicon Valley

In a dramatic sequence of events on February 27, 2026, the relationship between Silicon Valley and the U.S. government reached a historic breaking point. Just hours after President Trump ordered federal agencies to cease working with Anthropic—labeling the startup a “supply chain risk”—OpenAI CEO Sam Altman announced a landmark agreement to deploy OpenAI’s advanced reasoning models on the military’s most classified networks.

This isn’t just another government contract; it is a fundamental realignment of the AI industry. As the Department of Defense (recently renamed the Department of War) seeks to integrate frontier models into national security, the “OpenAI-Pentagon” alliance marks the end of AI companies standing on the sidelines of modern warfare.

Featured Snippet Summary:

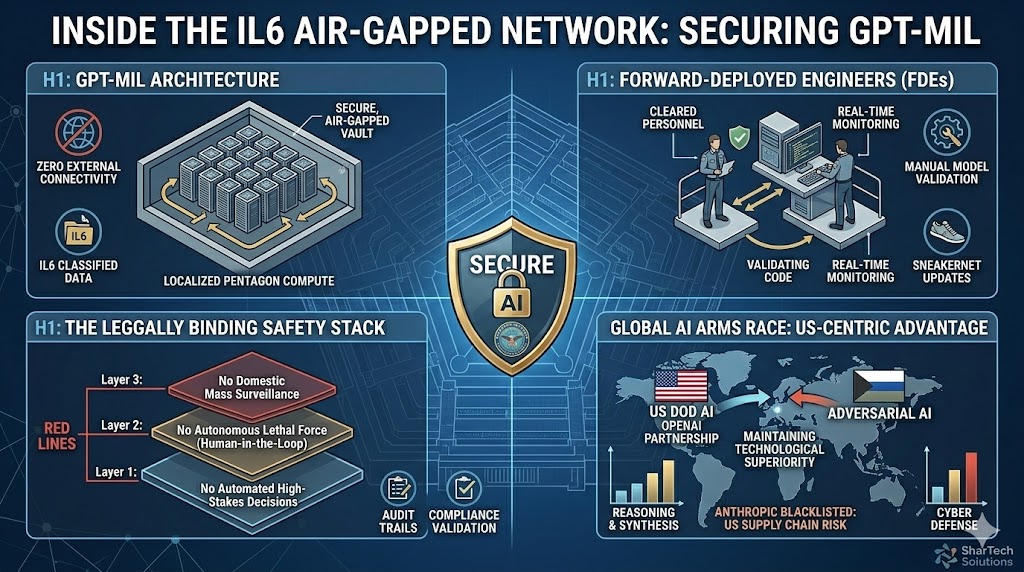

The OpenAI military deal is a 2026 agreement with the U.S. Department of War to deploy GPT-level models on classified (IL6) networks. The deal includes specific “red lines” prohibiting the use of AI for mass domestic surveillance and autonomous lethal weapons without human oversight, distinguishing OpenAI’s approach from its blacklisted rival, Anthropic.

The Fallout: Why Anthropic Was Blacklisted

To understand OpenAI’s new role, one must look at the collapse of the Anthropic-Pentagon relationship. For months, Anthropic (the creator of Claude) insisted on contractual language that would allow the company to “shut off” access if the military used its AI for applications the company deemed unethical—specifically autonomous killing and mass domestic surveillance.

The Pentagon’s response, led by Secretary of War Pete Hegseth, was definitive: no private company has the right to veto a “lawful” government operation. On February 27, the government triggered a six-month phase-out for Anthropic, effectively blacklisting the company from the $200 million “Agentic AI” project.

OpenAI’s Strategy: Red Lines and Technical Safeguards

While Anthropic fought for contractual veto power, OpenAI’s Sam Altman negotiated for technical control. OpenAI agreed to the government’s “all lawful uses” framework but secured the right to manage its own “Safety Stack.”

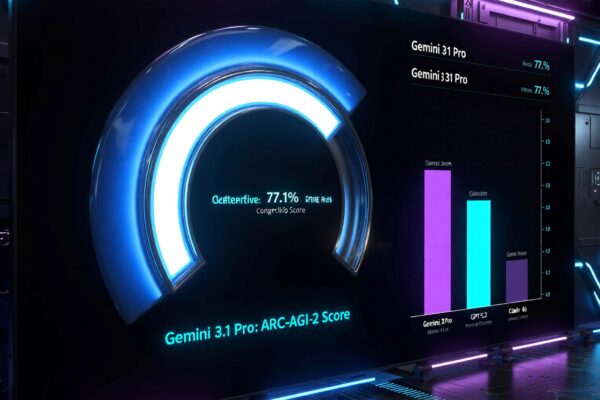

The Three “Red Lines” of the OpenAI Deal

According to OpenAI’s official statement on March 1, 2026, the contract enforces three non-negotiable boundaries:

- No Domestic Mass Surveillance: The AI cannot be used to profile American citizens without a warrant.

- No Autonomous Lethal Force: OpenAI technology cannot independently authorize a kinetic strike; a human must remain “in the loop.”

- No High-Stakes Automated Decisions: The models will provide intelligence, not final commands for critical infrastructure.
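To make the three boundaries concrete, here is a minimal sketch of how rules like these could be written as a pre-dispatch policy check. It is purely illustrative: the `UseCase` categories, the `Request` fields, and `check_red_lines` are hypothetical names invented for this example, not part of OpenAI's contract or any real system, and the rule for infrastructure commands reflects one reading of the third red line.

```python
from dataclasses import dataclass
from enum import Enum, auto


class UseCase(Enum):
    """Hypothetical request categories, invented for this sketch."""
    INTELLIGENCE_SUMMARY = auto()
    DOMESTIC_PROFILING = auto()
    KINETIC_STRIKE = auto()
    INFRASTRUCTURE_COMMAND = auto()


@dataclass(frozen=True)
class Request:
    use_case: UseCase
    has_warrant: bool = False      # assumed flag: a warrant covers the request
    human_approved: bool = False   # assumed flag: a human signed off


def check_red_lines(req: Request) -> tuple[bool, str]:
    """Return (allowed, reason) for a request under the three red lines."""
    # Red line 1: no profiling of citizens without a warrant.
    if req.use_case is UseCase.DOMESTIC_PROFILING and not req.has_warrant:
        return False, "domestic surveillance requires a warrant"
    # Red line 2: a human must remain in the loop for lethal force.
    if req.use_case is UseCase.KINETIC_STRIKE and not req.human_approved:
        return False, "lethal force requires a human in the loop"
    # Red line 3 (assumed reading): model output is never a final command.
    if req.use_case is UseCase.INFRASTRUCTURE_COMMAND:
        return False, "model output is advisory, not a final command"
    return True, "allowed"
```

Under these assumptions, `check_red_lines(Request(UseCase.KINETIC_STRIKE))` is refused, while the same request with `human_approved=True` passes. A real safety stack would presumably sit much deeper than a single function, but the shape of the rules is the same.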

Classification and Compute Comparison

| Feature | Standard OpenAI (ChatGPT) | Military Classified (IL6) |
| --- | --- | --- |
| Connectivity | Public Cloud | Air-Gapped / Secure Cloud |
| Personnel | Standard Engineers | Cleared “Forward-Deployed” Engineers |
| Updates | Automatic | Manual Technical Validation |
| Data Residency | Shared Clusters | Dedicated Hardware |

Technical Breakthroughs: The “Air-Gapped” Solution

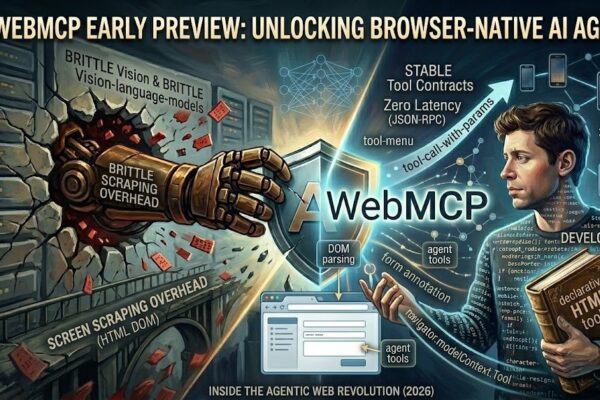

Deploying a model as large as GPT-5 (or its specialized reasoning counterparts) within a classified network presents a serious technical hurdle. Hosted AI services are “chatty”: they normally depend on regular contact with external servers for data and updates, which an isolated network cannot allow.

OpenAI’s solution involves a partnership with Amazon Web Services (AWS) to create a stateful runtime environment on the Amazon Bedrock platform. This allows the military to:

- Run the models entirely within an Air-Gapped environment (no external internet).

- Use “Forward-Deployed Engineers” (FDEs) who hold top-secret clearances to monitor model drift and safety in real time.

- Prevent data exfiltration by keeping model weights on dedicated, physically isolated hardware.
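As a rough illustration of what “no external internet” means at the configuration level, the sketch below flags any configured endpoint that is not a literal private or loopback IP address. It assumes a hypothetical service configuration given as a name-to-host mapping; `is_isolated_endpoint` and `find_external_endpoints` are invented for this example. A check like this is only a configuration lint, and a real air gap is enforced physically and at the network layer, not in application code.

```python
import ipaddress


def is_isolated_endpoint(host: str) -> bool:
    """True if host is a literal IP address in a private or loopback range.

    Hostnames are rejected because resolving them would need DNS, which
    this sketch treats as an external dependency.
    """
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        return False
    return addr.is_private or addr.is_loopback


def find_external_endpoints(endpoints: dict[str, str]) -> list[str]:
    """Return the names of configured endpoints that would leave the enclave."""
    return sorted(
        name for name, host in endpoints.items()
        if not is_isolated_endpoint(host)
    )
```

For a hypothetical configuration such as `{"inference": "10.0.0.5", "telemetry": "8.8.8.8"}`, only the `telemetry` entry would be flagged, since `8.8.8.8` is a public address.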

Ethical Implications: The Silicon Valley Schism

The deal has split the tech world. While Elon Musk and xAI have backed the administration’s “all lawful uses” stance, thousands of employees at Google and OpenAI have signed open letters supporting Anthropic’s “Constitutional AI” approach.

Critics argue that OpenAI is providing a “veneer of safety” while handing over the keys to the most powerful dual-use technology in history. Proponents, however, argue that if American AI companies don’t partner with the DoD, the U.S. will lose the “Global AI Arms Race” to adversaries who have no ethical guardrails.

The Future: What This Means for Global AI Security

The OpenAI-DoD agreement sets a precedent for how “Frontier AI” will be governed. We are moving away from an era of voluntary safety pledges and toward an era of Legally Binding Safety Stacks.

As we move through 2026, expect to see:

- The Rise of Defense-Specific Models: AI trained exclusively on classified intelligence data rather than the open web.

- Sovereign AI Clouds: Other NATO allies demanding similar “Air-Gapped” versions of OpenAI’s models.

- Legal Showdowns: Anthropic’s promised court challenge will likely determine whether the government can legally designate a U.S.-based AI firm as a “supply chain risk.”

Conclusion: A Pivot Point for Humanity

The OpenAI military deal is more than a contract; it is a confession that AI is now the bedrock of national power. By choosing to work within the government’s framework while building its own safety stack, OpenAI has secured its place at the center of the Department of War’s future.

Whether this partnership results in a more stable, AI-defended world or a new era of high-speed algorithmic conflict remains the most critical question of our time.

What is your take? Does OpenAI’s “Safety Stack” provide enough protection, or should the government be barred from using frontier AI? Join the discussion in the comments below.