Introduction: The Dawn of Reasoning-Driven Imagery

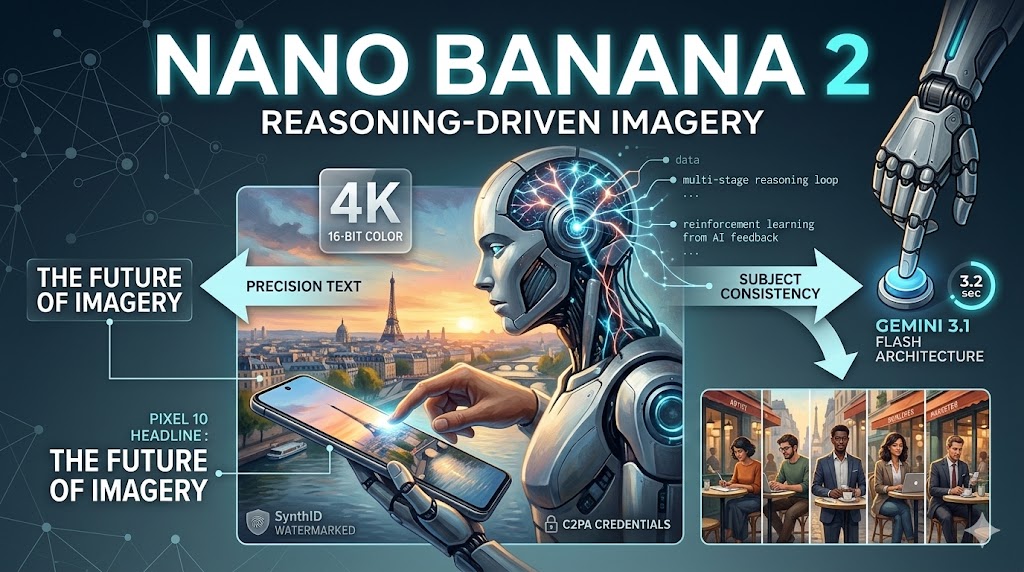

On February 26, 2026, Google DeepMind unveiled Nano Banana 2, the latest model in its AI image generation lineup. The original Nano Banana went viral for its speed. Nano Banana 2 (technically Gemini 3.1 Flash Image) marks a more fundamental shift: the move from simple pattern matching to reasoning-driven visual intelligence.

Creators have moved beyond asking AI for “pretty pictures”; they now demand precision. Nano Banana 2 answers that demand by pairing the high-fidelity output of Google’s “Pro” models with the speed of the “Flash” architecture. Whether you are a digital artist, a marketing professional, or a developer, the model is designed to reshape your creative workflow.

What Makes Nano Banana 2 Different?

At its core, Nano Banana 2 builds on Google’s most advanced neural network architecture to date. Unlike its predecessor, which worked as a standard diffusion model, Nano Banana 2 uses a Multi-Stage Reasoning Loop: before a single pixel is rendered, the model “thinks” about the prompt by planning the layout, evaluating spatial relationships, and validating any text.

The “Brain and Hand” Architecture

Industry analysts often describe the Nano Banana 2 system as a “Brain and Hand” architecture:

- The Brain (Gemini 3.1 Reasoning): Understands complex prompts, verifies historical facts via Google Search grounding, and plans the composition.

- The Hand (GemPix 2 Diffusion): Executes the actual rendering with 16-bit color depth and native 4K resolution.

This synergy means that when you ask for “a scientific infographic about the water cycle,” the model doesn’t just draw blue lines; it understands the hydrological process and labels the stages with typographic precision.
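Google has not published how this planning step works internally. As a purely illustrative sketch of the plan-then-render separation described above, the toy Python below has a hypothetical “Brain” stage lay out the water-cycle labels, checks every label against a glossary, and only then hands the plan to a stand-in “Hand” stage. All names (`plan`, `validate`, `render`, `generate`, `WATER_CYCLE_STAGES`) are invented for this example and are not part of any Google API.

```python
from dataclasses import dataclass

# Hypothetical glossary standing in for the "Brain's" knowledge of the topic.
WATER_CYCLE_STAGES = ["Evaporation", "Condensation", "Precipitation", "Collection"]


@dataclass
class LayoutPlan:
    """Output of the hypothetical planning ("Brain") stage."""
    labels: list[str]
    positions: dict[str, tuple[int, int]]


def plan(stages: list[str], width: int = 1024, y: int = 100) -> LayoutPlan:
    # Place one label per stage, evenly spaced along a horizontal band.
    step = width // (len(stages) + 1)
    positions = {name: (step * (i + 1), y) for i, name in enumerate(stages)}
    return LayoutPlan(labels=list(stages), positions=positions)


def validate(p: LayoutPlan, glossary: list[str]) -> list[str]:
    # Return every planned label that is not spelled exactly as in the glossary.
    return [label for label in p.labels if label not in glossary]


def render(p: LayoutPlan) -> str:
    # Stand-in for the "Hand": describes where each label would be drawn.
    return "; ".join(f"{name}@{xy}" for name, xy in p.positions.items())


def generate(stages: list[str], glossary: list[str]) -> str:
    p = plan(stages)
    errors = validate(p, glossary)
    if errors:
        # Reject the plan before any (expensive) rendering happens.
        raise ValueError(f"plan rejected, misspelled labels: {errors}")
    return render(p)


print(generate(WATER_CYCLE_STAGES, WATER_CYCLE_STAGES))
```

A plan containing a misspelling such as `"Evaporaton"` fails validation before anything is rendered. The point of the ordering is that errors are caught at the cheap planning stage rather than after an expensive render.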

Key Features and Performance Benchmarks

Google has packed Nano Banana 2 with features that were previously reserved for studio-grade, expensive enterprise models.

1. Precision Text Rendering and Translation

Historically, AI image generators struggled with text, often producing “gibberish” or distorted letters. Nano Banana 2 achieves near-perfect text rendering. This makes it ideal for:

- Marketing Mockups: Creating billboards with legible headlines.

- Greeting Cards: Personalized messages in stylized fonts.

- Localization: The model can translate text within an image, allowing a sign in English to be regenerated in Japanese while maintaining the same font and style.

2. Native 4K Resolution and 16-bit Color

Nano Banana 2 supports output tiers from 512px up to 4K. Unlike models that rely on external upscalers, which often introduce “hallucinated” artifacts, Nano Banana 2 generates natively at the target resolution. The move to 16-bit color rendering also eliminates gradient banding, a common problem in smooth gradients such as skies and skin tones.
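Why does bit depth matter for banding? The sketch below quantizes an ideal, very subtle sky gradient at 8 and 16 bits per channel and counts how many distinct values survive. It assumes “16-bit” means 16 bits per channel (the usual meaning when contrasted with 8-bit), and it ignores dithering and display limitations; the gradient values are illustrative.

```python
import math


def distinct_levels(start: float, end: float, width: int, bits: int) -> int:
    """Quantize an ideal linear gradient of `width` pixels to `bits` per channel
    and count how many distinct code values remain (fewer values = wider bands)."""
    max_code = (1 << bits) - 1  # 255 at 8-bit, 65535 at 16-bit
    codes = set()
    for i in range(width):
        v = start + (end - start) * i / (width - 1)  # continuous intensity in [0, 1]
        codes.add(math.floor(v * max_code + 0.5))    # round half up to nearest code
    return len(codes)


# A subtle sky gradient: intensity 0.60 -> 0.70 across a 4096-pixel-wide image.
for bits in (8, 16):
    n = distinct_levels(0.60, 0.70, 4096, bits)
    print(f"{bits}-bit: {n} distinct levels, average band width {4096 / n:.1f} px")
```

At 8 bits the 4,096-pixel gradient collapses into only a few dozen steps, each roughly 150 pixels wide, which the eye picks up as visible bands. At 16 bits every pixel keeps its own value.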

3. Subject and Character Consistency

One of the most requested features in AI art is the ability to keep a character looking the same across different scenes. Nano Banana 2 supports:

- Consistent Resemblance: Maintain up to five characters across multiple generations.

- Object Fidelity: Keep up to 14 specific objects (like a specific car or a branded product) consistent in a single workflow.
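If you are scripting batch jobs around these limits, a client-side pre-flight check can fail fast before a request is sent. The sketch below is hypothetical: the `ReferenceSet` class and its fields are invented for illustration, and only the limits (five characters, 14 objects) come from the figures above.

```python
from dataclasses import dataclass, field

# Limits as stated above; the request structure itself is hypothetical.
MAX_CHARACTERS = 5
MAX_OBJECTS = 14


@dataclass
class ReferenceSet:
    characters: list[str] = field(default_factory=list)  # e.g. character reference image paths
    objects: list[str] = field(default_factory=list)     # e.g. product or vehicle reference paths

    def problems(self) -> list[str]:
        """Return a human-readable list of limit violations (empty if valid)."""
        issues = []
        if len(self.characters) > MAX_CHARACTERS:
            issues.append(f"{len(self.characters)} characters > {MAX_CHARACTERS}")
        if len(self.objects) > MAX_OBJECTS:
            issues.append(f"{len(self.objects)} objects > {MAX_OBJECTS}")
        return issues


refs = ReferenceSet(characters=[f"hero_{i}.png" for i in range(6)])
print(refs.problems())
```

Here six character references exceed the five-character limit, so `problems()` reports `"6 characters > 5"` while the empty object list passes.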

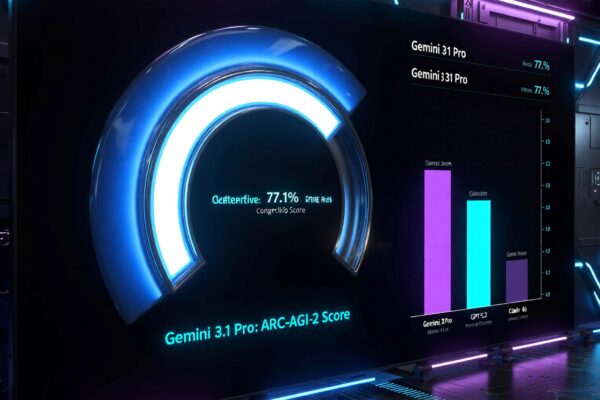

Performance Data at a Glance

| Metric | Nano Banana 1 | Nano Banana 2 (Flash) |
| --- | --- | --- |
| Avg. Generation Time | 12-15 seconds | 3.2 seconds |
| Max Resolution | 1024px | 4096px (4K) |
| Text Accuracy | 45% (estimated) | 98% (measured) |
| Color Depth | 8-bit | 16-bit |
| Search Grounding | No | Yes (real-time) |
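The table’s resolution and color-depth rows translate into a large jump in raw data per image. The back-of-the-envelope calculation below assumes square frames (the table lists a single dimension), three RGB channels with no alpha, bit depth counted per channel, and one image generated at a time.

```python
def raw_frame_bytes(side_px: int, bits_per_channel: int, channels: int = 3) -> int:
    """Uncompressed size in bytes of a square frame with `channels` color channels."""
    return side_px * side_px * channels * bits_per_channel // 8


MIB = 1024 * 1024
nb1 = raw_frame_bytes(1024, 8)    # Nano Banana 1 maximum: 1024px, 8-bit
nb2 = raw_frame_bytes(4096, 16)   # Nano Banana 2 maximum: 4096px, 16-bit
print(f"NB1: {nb1 / MIB:.0f} MiB, NB2: {nb2 / MIB:.0f} MiB, ratio {nb2 // nb1}x")

# Images per hour at the average generation times listed in the table.
for name, seconds in [("NB1 (15 s)", 15), ("NB1 (12 s)", 12), ("NB2 (3.2 s)", 3.2)]:
    print(f"{name}: {3600 / seconds:.0f} images/hour")
```

Under those assumptions, a maximum-size Nano Banana 2 frame holds 32 times the raw data of a Nano Banana 1 frame (96 MiB versus 3 MiB uncompressed), yet throughput rises from 240-300 to about 1,125 images per hour.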

Technical Breakthroughs: Context-Aware Diffusion

The real “magic” behind the model is the Context-Aware Diffusion system. Previous models often failed at “spatial reasoning”: for example, they might place a coffee cup inside a table rather than on it.

Nano Banana 2 understands physical relationships. If you prompt “a person standing behind a glass window with rain on it,” the model calculates:

- The transparency of the glass.

- The refraction of light through the raindrops.

- The depth of field required to keep the person slightly out of focus while the rain is sharp.
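Google has not described how the model represents physical relationships internally. To make the coffee-cup example concrete, the sketch below uses a plain geometric check on axis-aligned bounding boxes in a 2D side view: a cup is “on” the table when its base touches the tabletop, and “inside” it when the two boxes overlap. The `Box` class, the units, and the tolerance are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned box in a 2D side view: x grows right, y grows up."""
    left: float
    right: float
    bottom: float
    top: float


def rests_on(obj: Box, support: Box, tol: float = 0.5) -> bool:
    """True if `obj` sits on `support`: its base touches the support's top
    surface (within `tol`) and it lies horizontally within the support."""
    touching = abs(obj.bottom - support.top) <= tol
    within = support.left <= obj.left and obj.right <= support.right
    return touching and within


def interpenetrates(a: Box, b: Box) -> bool:
    """True if the two boxes overlap in area, i.e. one is partly 'inside' the other."""
    return a.left < b.right and b.left < a.right and a.bottom < b.top and b.bottom < a.top


table = Box(left=0, right=120, bottom=0, top=75)
cup_on = Box(left=50, right=58, bottom=75, top=85)  # base resting on the tabletop
cup_in = Box(left=50, right=58, bottom=60, top=70)  # sunk into the table

print(rests_on(cup_on, table), interpenetrates(cup_on, table))
print(rests_on(cup_in, table), interpenetrates(cup_in, table))
```

The first cup rests on the table without overlapping it; the second fails the “on” test and overlaps the table, which is exactly the “cup inside a table” error described above.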

2026 AI Image Model Comparison: Nano Banana 2 vs. The Field

| Feature | Nano Banana 2 (Google) | Midjourney v7 | DALL-E 4 (OpenAI) |
| --- | --- | --- | --- |
| Primary Strength | Reasoning & Speed | Artistic Aesthetics | Prompt Adherence |
| Max Resolution | 4K (native) | 8K (upscaled) | 4K (native) |
| Generation Time (per image) | ~3.2 seconds | ~45-60 seconds | ~15-20 seconds |
| Consistency Mode | Yes (native logic) | Yes (reference tags) | Moderate (seed-based) |
| Search Grounding | Yes (Google Search) | No | Limited (Bing) |
| Ideal For | Commercial & Tech Art | Photography & Fine Art | General Purpose |
| Typography | Near-Perfect | Good | Excellent |
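To see what the per-image times mean for a production batch, the sketch below converts them into wall-clock minutes for 100 images. It uses the approximate figures from the table and assumes images are generated one after another, with no queueing or parallel requests, which the table does not specify.

```python
# Approximate per-image times from the comparison table, as (low, high) seconds.
TIMES = {
    "Nano Banana 2": (3.2, 3.2),
    "Midjourney v7": (45, 60),
    "DALL-E 4": (15, 20),
}


def batch_minutes(n_images: int, per_image_s: tuple[float, float]) -> tuple[float, float]:
    """Best- and worst-case wall-clock minutes for n images generated sequentially."""
    low, high = per_image_s
    return n_images * low / 60, n_images * high / 60


for model, t in TIMES.items():
    lo, hi = batch_minutes(100, t)
    span = f"{lo:.1f}" if lo == hi else f"{lo:.1f}-{hi:.1f}"
    print(f"{model}: {span} min for 100 images")
```

With these inputs, 100 images take about 5.3 minutes with Nano Banana 2, 25-33 minutes with DALL-E 4, and 75-100 minutes with Midjourney v7.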

Practical Applications and Use Cases

For Digital Artists and Designers

The model serves as a “Creative Director.” With multimodal input, you can upload a rough hand-drawn sketch together with a text prompt; Nano Banana 2 uses the sketch as a layout guide and the text to define style and lighting.

In Advertising and Marketing

Agencies can now bypass days of stock photo searching. By using Google Search Grounding, a marketer can prompt “a high-end product shot of the new Pixel 10 in a Parisian cafe at sunset,” and the model will verify the exact look of the Pixel 10 and the architecture of Paris to ensure factual accuracy.

For Technical Documentation

The ability to generate accurate isometric cutaway illustrations and labeled diagrams makes it an invaluable tool for educators and technical writers. It can turn complex notes into a visual “Earth’s Layers” diagram with clear, correctly spelled labels for the Crust, Mantle, and Core.

Ethical Considerations and Safety

Google has implemented a multi-layered safety approach for Nano Banana 2.

- SynthID Watermarking: Every image includes an invisible digital watermark to identify it as AI-generated.

- C2PA Content Credentials: Metadata that tracks “how” the AI was used, providing transparency to the viewer.

- Ethical Guardrails: Advanced filtering prevents the generation of deepfakes of private individuals or harmful content.

FAQ: Everything You Need to Know

Is Nano Banana 2 free?

Yes, it is the default model for free Gemini users as of February 27, 2026. However, paid subscribers (Google AI Pro/Ultra) get higher quotas and priority access to 4K output.

How do I access the “Thinking” mode?

In the Gemini app or AI Studio, select the “Thinking” or “Pro” toggle. This forces the model to perform extra reasoning steps for complex spatial prompts.

Can it generate text in other languages?

Yes, it currently supports 8 major languages for text rendering, with more rolling out throughout 2026.

Does it replace Nano Banana Pro?

Nano Banana 2 (Flash) is designed for speed and high-volume work. Nano Banana Pro remains available for “Hero Assets” that require 8K resolution or the most extreme multi-subject compositions.

Conclusion: The Future of Human-AI Creativity

Nano Banana 2 is not just a tool; it is a glimpse into a future where human creativity and artificial intelligence work in a seamless loop. By eliminating technical bottlenecks like poor text rendering and slow generation times, Google has democratized professional-grade design.

The AI revolution in image generation is no longer about novelty; it is about utility. As we move deeper into 2026, Nano Banana 2 will likely become the standard for creators who refuse to choose between speed and quality.

Ready to try it? Head over to the Gemini app or Google Search AI Mode today to start creating.