Have you ever looked at a page of mathematical formulas for a neural network and felt like you were reading ancient hieroglyphics? You’re not alone. For years, the “math barrier” kept brilliant creative minds out of the AI space.

But in 2026, that barrier has finally crumbled. Visual Machine Learning (VML) has moved from a niche educational aid to a primary development method. By transforming abstract “black box” code into interactive, tangible experiences, we are entering the era of Explainable AI (XAI)—where you don’t just build a model; you see how it thinks.

What Is Visual Machine Learning?

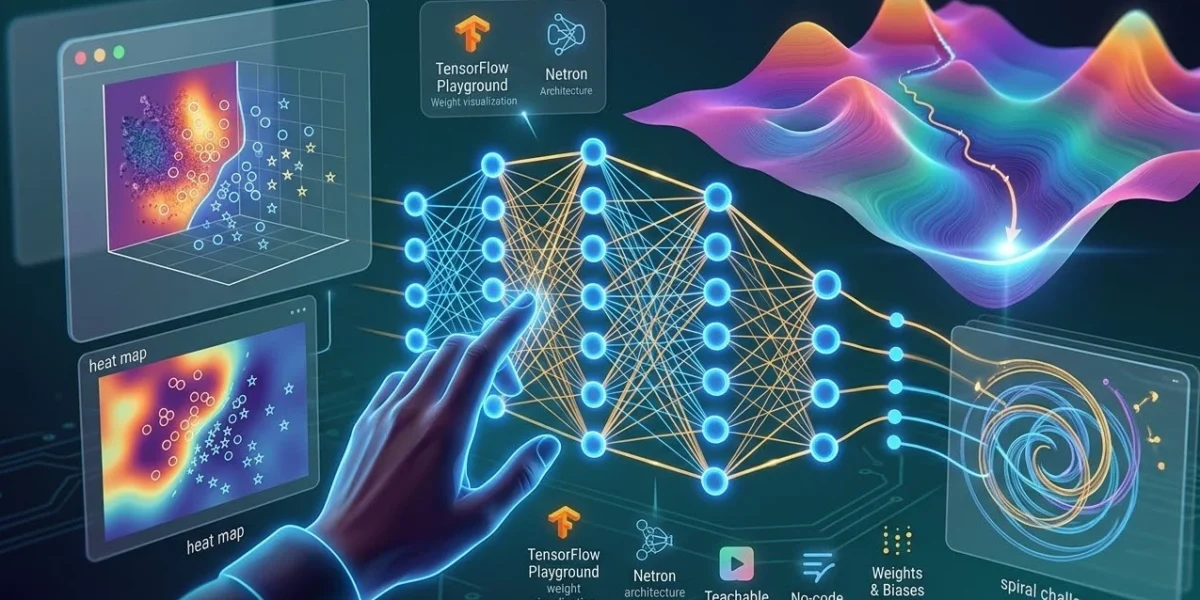

Visual machine learning is the practice of using interactive graphics, real-time animations, and spatial UI to design and debug AI models. Instead of writing 100 lines of Python to see a decision boundary, you use a visual interface to manipulate data points and watch the model adapt instantly.

At its core, VML bridges the gap between high-level theory and practical execution. This is especially vital in 2026, as AI systems become more complex and the need for human-in-the-loop oversight grows.

Why Your Brain Prefers Visual AI

A commonly cited figure holds that about 65% of people are visual learners. Whatever the exact number, AI is a field where a single parameter change can ripple through millions of neurons, and static text alone rarely conveys that.

Visual learning in machine learning helps you master:

- Data Topology: Understanding how your data is clustered in 3D space.

- Decision Boundaries: Seeing exactly where a classifier draws the line between “Cat” and “Dog.”

- Gradient Descent: Watching an algorithm “walk” down a loss landscape to find the most efficient solution.
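To make the “walk” concrete, here is a minimal sketch of gradient descent on a one-dimensional, bowl-shaped loss. The loss function, starting point, and learning rate are illustrative assumptions chosen for this example, not something taken from a particular tool. Each entry in `path` is one position a visualizer would draw on the landscape.

```python
# Illustrative loss: a simple bowl whose lowest point is at w = 3 (an assumption for this sketch).
def loss(w):
    return (w - 3.0) ** 2


def grad(w):
    # Derivative of (w - 3)^2 with respect to w.
    return 2.0 * (w - 3.0)


def gradient_descent(start, learning_rate=0.1, steps=50):
    """Return every position visited while stepping downhill."""
    w = start
    path = [w]
    for _ in range(steps):
        w = w - learning_rate * grad(w)  # step against the slope
        path.append(w)
    return path


path = gradient_descent(start=-4.0)
for step in (0, 1, 5, 20, 50):
    print(f"step {step:2d}: w = {path[step]:.4f}, loss = {loss(path[step]):.6f}")
```

Plotting `path` against `loss` gives the familiar picture of a ball rolling toward the bottom of the bowl, with each step shorter than the last as the slope flattens.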

The 2026 VML Toolkit: Top Platforms for Beginners

If you are starting today, you don’t need a PhD. You need the right sandbox. Here is how the top tools compare in the current landscape:

| Tool | Best For | Key 2026 Feature |
| --- | --- | --- |
| TensorFlow Playground | Concept Clarity | Real-time weight/bias thickness visualization |
| Netron | Model Architecture | Support for visionOS & AR model headers |
| Teachable Machine | Rapid Prototyping | No-code computer vision via webcam |
| Weights & Biases | Professional Tracking | Visualizing “Context Rot” in LLMs |

Pro-Tip: The “Spiral” Challenge

When you first open TensorFlow Playground, try the “Spiral” dataset. It’s the ultimate test for a beginner. You’ll quickly see why a “Linear” activation fails (stacked linear layers can still only draw a straight boundary) and why you need “ReLU” or “Tanh” to fit curved patterns.
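The reason linear activations fall short on the spiral can be checked in a few lines. The sketch below uses two small, made-up weight matrices (`W1` and `W2` are assumptions for illustration only) to show that two stacked linear layers behave exactly like a single linear layer, while putting a ReLU between them produces a different result.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]


def relu(vector):
    return [max(0.0, x) for x in vector]


# Hypothetical weights for a tiny two-layer network (no biases, for simplicity).
W1 = [[1.0, -2.0], [0.5, 1.0]]
W2 = [[2.0, 1.0], [-1.0, 3.0]]
x = [1.0, 2.0]

# Two linear layers in a row...
stacked_linear = matvec(W2, matvec(W1, x))
# ...are the same as one layer whose weights are the product W2 * W1.
single_linear = matvec(matmul(W2, W1), x)
# A ReLU between the layers breaks that equivalence.
with_relu = matvec(W2, relu(matvec(W1, x)))

print("stacked linear:", stacked_linear)
print("single linear: ", single_linear)
print("with ReLU:     ", with_relu)
```

Because the linear stack collapses into one matrix, adding more linear layers never buys a more flexible boundary. The nonlinearity is what lets extra layers bend it.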

Key Components of Modern Visual ML

To truly “speak” AI in 2026, you must understand these three pillars of the visual approach:

1. Interactive Heatmaps

These show you where a model is “looking.” For example, in a medical AI, a visual heatmap might highlight the specific pixels in an X-ray that led the model to flag a potential anomaly.
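One simple way to build such a heatmap is occlusion: hide one pixel at a time and record how much the model’s score drops. The sketch below is a toy version under stated assumptions. The `toy_model_score` function is a hypothetical stand-in for a trained model, and the 4x4 “image” is made-up data. Real tools often use gradient-based methods instead.

```python
def toy_model_score(image):
    # Hypothetical stand-in for a trained model: it only responds to
    # brightness in the central 2x2 block of a 4x4 image.
    return sum(image[r][c] for r in (1, 2) for c in (1, 2))


def occlusion_heatmap(image, score_fn):
    """Score drop caused by blanking each pixel; a bigger drop means a more important pixel."""
    baseline = score_fn(image)
    heatmap = []
    for r, row in enumerate(image):
        heat_row = []
        for c, _ in enumerate(row):
            occluded = [list(original_row) for original_row in image]  # copy the image
            occluded[r][c] = 0.0  # hide a single pixel
            heat_row.append(baseline - score_fn(occluded))
        heatmap.append(heat_row)
    return heatmap


# Made-up 4x4 grayscale image with a bright region in the middle.
image = [
    [0.2, 0.1, 0.0, 0.3],
    [0.1, 0.9, 0.8, 0.0],
    [0.0, 0.7, 1.0, 0.2],
    [0.3, 0.0, 0.1, 0.1],
]

heatmap = occlusion_heatmap(image, toy_model_score)
for row in heatmap:
    print(" ".join(f"{value:.1f}" for value in row))
```

The printed grid is zero wherever the toy model ignores the pixel and largest where hiding the pixel hurts the score most, which is the pattern a colored overlay would show.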

2. Loss Landscapes

Imagine a mountain range where the lowest valley is the perfect model. Visualizing this “Loss Landscape” allows you to see if your model is stuck in a “local minimum” (a shallow ditch) instead of the “global minimum” (the deepest valley).
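The ditch-versus-valley idea can be sketched with a one-dimensional landscape that has two dips. The function below is an illustrative assumption picked because it has a shallow minimum on the right and a deeper one on the left. The same gradient descent routine ends up in a different dip depending only on where it starts.

```python
def loss(x):
    # Illustrative landscape: a shallow dip near x = 1.13 and a deeper one near x = -1.30.
    return x ** 4 - 3 * x ** 2 + x


def grad(x):
    # Derivative of the loss above.
    return 4 * x ** 3 - 6 * x + 1


def descend(start, learning_rate=0.01, steps=500):
    """Plain gradient descent; returns the final position."""
    x = start
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


from_right = descend(start=2.0)   # rolls into the shallow ditch
from_left = descend(start=-2.0)   # rolls into the deepest valley

print(f"start at  2.0 -> x = {from_right:.3f}, loss = {loss(from_right):.3f}")
print(f"start at -2.0 -> x = {from_left:.3f}, loss = {loss(from_left):.3f}")
```

Neither run “knows” about the other dip, since each one only follows the local slope. A loss landscape plot makes that blind spot visible at a glance.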

3. Spatial Node Editors

In 2026, we are moving away from flat screens. With visionOS integration, developers can now “walk through” a neural network in 3D, pulling on nodes and adjusting layers with hand gestures.

The Future: From Screens to Spatial Reality

The next frontier of visual machine learning is Immersive XAI. We are already seeing the first wave of AR apps that allow engineers to visualize data streams in physical space. Imagine standing in your warehouse and seeing a “digital twin” of your logistics AI, color-coded to show which routes are being optimized in real-time.

Conclusion: Your Journey Starts with a Click

Visual Machine Learning isn’t just an “easier” way to learn; it’s a better way to build. By making the invisible visible, we can spot bias sooner, catch errors faster, and make AI more human.

Ready to start your visual machine learning journey? Head over to the TensorFlow Playground and try to solve the spiral dataset. It’s the best way to “feel” the math before you ever have to write it.