Writing software has fundamentally changed for me since I started incorporating Large Language Models (LLMs) into my development process. What once took hours of research, debugging, and documentation review now flows much more naturally with AI assistance. Here is my complete, step-by-step workflow for writing software with LLMs.

Why I Use LLMs for Software Development

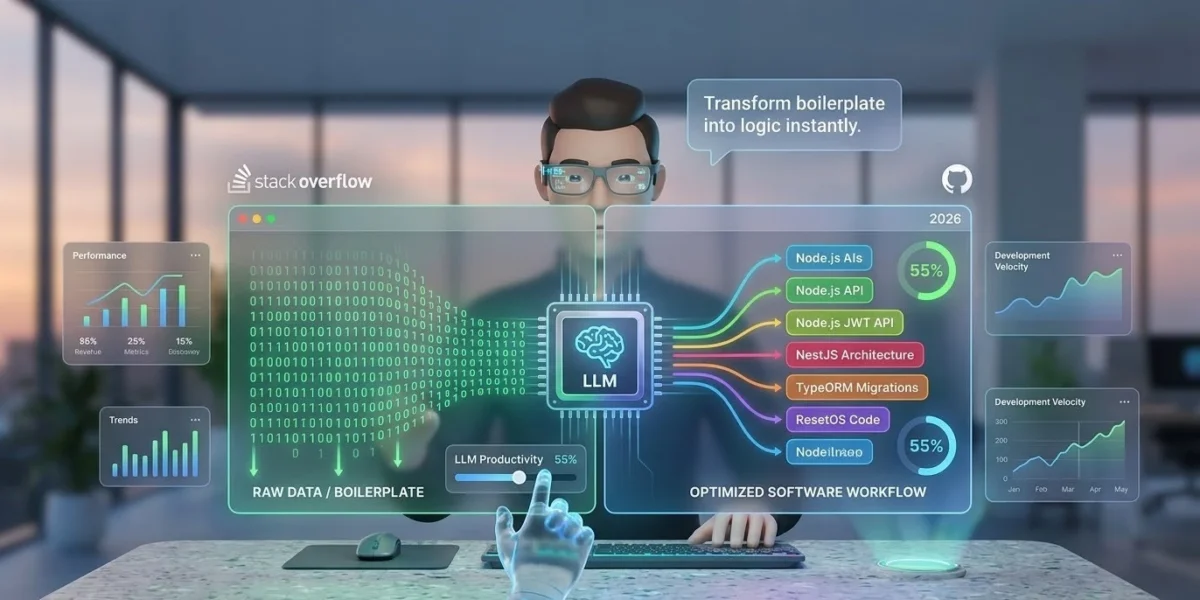

The software development landscape has evolved dramatically. According to GitHub’s 2023 developer survey, 92% of U.S.-based developers already use AI coding tools, either in or outside of work. The productivity gains look real, too: in GitHub’s own controlled experiment with Copilot, developers completed a coding task about 55% faster with AI assistance.

My journey with LLMs began when I realized how much time I was spending on repetitive tasks: boilerplate code, syntax lookup, and debugging common errors. LLMs excel in these areas, freeing me to focus on high-level architecture and creative problem-solving.

My LLM-Enhanced Development Workflow

1. Planning and Architecture

Before writing a single line of code, I use LLMs to help structure my approach. I’ll describe the problem and ask for potential solutions, comparing different architectural patterns. This isn’t about letting the AI make decisions—it’s about getting alternative perspectives that I might not have considered.

For example, when building a new feature, I’ll ask: “What are the pros and cons of implementing this as a microservice versus a monolith in a NestJS environment?” The AI’s response often highlights scalability or latency considerations I hadn’t fully weighed.

2. Code Generation and Boilerplate

This is where LLMs truly shine. I use them to generate:

- Initial project scaffolding and folder structures

- API endpoint templates (Express, NestJS, Fastify)

- Database models and TypeORM migrations

- Unit test templates for Jest or Vitest
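To illustrate the last item, here is a sketch of the kind of test template an LLM might return. The article's stack is Jest or Vitest; this example uses Python's built-in `unittest` instead, purely to keep it dependency-free. `apply_discount` is a hypothetical function invented for the illustration.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce `price` by `percent` (0 to 100)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    """Template: one typical case, the boundaries, and one failure case."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_percent_returns_original_price(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_full_discount_is_free(self):
        self.assertEqual(apply_discount(80.0, 100), 0.0)

    def test_out_of_range_percent_raises(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)
```

Saved as a module, this can be run with `python -m unittest`. The generated cases are a starting point; the boundaries that matter for a real function still have to come from someone who knows the domain.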

Pro-Tip: The quality of AI output depends on the specificity of your prompt. Instead of “create a REST API,” specify: “Create a Node.js Express REST API with JWT authentication, including user registration and login routes using PostgreSQL.”

3. Documentation and Comments

Writing documentation is often the task developers dread most. I’ve found LLMs to be excellent at generating clear, concise documentation. After writing a complex function, I’ll ask the AI for a JSDoc block or docstring that explains the logic, parameters, and potential edge cases.

This approach ensures my code remains maintainable for my future self and my team, without the manual overhead of writing every explanation from scratch.
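As a rough illustration of what such a docstring can look like, here is a small Python function documented in the common "Args / Yields / Raises" layout. The function `chunked` is a hypothetical example, not code from any particular project.

```python
from typing import Iterator, Sequence, TypeVar

T = TypeVar("T")


def chunked(items: Sequence[T], size: int) -> Iterator[Sequence[T]]:
    """Yield consecutive slices of ``items`` with at most ``size`` elements.

    Args:
        items: The sequence to split. Each chunk is a slice of it.
        size: Maximum length of each chunk. Must be a positive integer.

    Yields:
        Slices of ``items`` in their original order. The last slice may be
        shorter than ``size``.

    Raises:
        ValueError: If ``size`` is less than 1. Because this is a generator,
            the error is raised when iteration begins, not at call time.

    Edge cases:
        An empty ``items`` yields nothing.
    """
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]
```

The "Raises" note about generators is the kind of detail worth checking by hand: a generated docstring can describe behavior the code does not actually have.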

4. Debugging and Troubleshooting

When I encounter errors, I copy the error message and the relevant code snippet into the LLM. The AI often identifies the issue immediately, suggesting fixes that I might have missed after hours of staring at the same screen.

For instance, during a recent project, I was struggling with a memory leak in a Python application. The AI quickly identified that I was creating database connections inside a loop without proper cleanup—a subtle mistake I’d overlooked despite extensive debugging.
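The pattern can be sketched as follows. The article does not say which database or driver was involved, so this uses the standard-library `sqlite3` module as a stand-in, and the `orders` table and function names are hypothetical.

```python
import sqlite3
from contextlib import closing
from typing import Dict, Iterable

QUERY = "SELECT COALESCE(SUM(amount), 0) FROM orders WHERE customer_id = ?"


def order_totals_leaky(db_path: str, customer_ids: Iterable[int]) -> Dict[int, float]:
    """Anti-pattern: a new connection on every iteration, never closed."""
    totals = {}
    for customer_id in customer_ids:
        conn = sqlite3.connect(db_path)  # opened on every pass...
        totals[customer_id] = conn.execute(QUERY, (customer_id,)).fetchone()[0]
        # ...and conn.close() is never called, so cleanup is left to the
        # garbage collector, or never happens if a reference survives.
    return totals


def order_totals_fixed(db_path: str, customer_ids: Iterable[int]) -> Dict[int, float]:
    """Open one connection outside the loop and close it deterministically."""
    totals = {}
    # closing() guarantees conn.close() runs even if a query raises.
    # Note: `with sqlite3.connect(...)` on its own only manages the
    # transaction; it does not close the connection.
    with closing(sqlite3.connect(db_path)) as conn:
        for customer_id in customer_ids:
            totals[customer_id] = conn.execute(QUERY, (customer_id,)).fetchone()[0]
    return totals
```

Both functions return the same result, which is part of why this kind of bug is easy to miss: the difference shows up only in resource usage over time.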

Best Practices for Using LLMs in Development

Understand What You’re Getting

LLMs can generate “hallucinated” or insecure code. I always manually verify the output, especially for security-critical components. Never blindly trust AI for authentication, encryption, or financial logic.
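One concrete thing to look for when reviewing generated authentication code is how passwords are hashed and compared. The sketch below contrasts a pattern to reject with a safer standard-library alternative. The function names are hypothetical, and the iteration count is an assumption for illustration rather than a recommendation; check current guidance for your stack.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 600_000  # illustrative assumption, not a vetted setting


def hash_password_weak(password: str) -> str:
    """Pattern to reject in review: a fast, unsalted hash."""
    return hashlib.md5(password.encode()).hexdigest()


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Salted, deliberately slow key derivation (PBKDF2-HMAC-SHA256)."""
    if salt is None:
        salt = os.urandom(16)  # fresh random salt for each new password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Re-derive the digest with the stored salt and compare."""
    _, digest = hash_password(password, salt)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)
```

In production, a maintained password-hashing library is usually a better choice than hand-rolled code; the point here is only what a manual review should be able to spot.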

Provide Rich Context

To get the best results, always include:

- The exact programming language and framework version.

- Existing constraints (e.g., “Must run on Node 18”).

- Expected input/output formats.
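One way to make this checklist a habit is to assemble prompts from a small structure rather than typing them freehand. The following is a minimal sketch; `PromptContext` and `build_prompt` are hypothetical names, and the version numbers in the usage example are placeholders.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptContext:
    task: str
    language: str             # include the version, e.g. "TypeScript 5.x"
    framework: str            # include the version, e.g. "Express 4.x"
    constraints: List[str] = field(default_factory=list)
    input_format: str = ""
    output_format: str = ""


def build_prompt(ctx: PromptContext) -> str:
    """Render the context as a plain-text prompt, skipping empty fields."""
    lines = [
        f"Task: {ctx.task}",
        f"Language: {ctx.language}",
        f"Framework: {ctx.framework}",
    ]
    if ctx.constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in ctx.constraints)
    if ctx.input_format:
        lines.append(f"Input: {ctx.input_format}")
    if ctx.output_format:
        lines.append(f"Expected output: {ctx.output_format}")
    return "\n".join(lines)


example = PromptContext(
    task="Create a REST API with JWT authentication, including user "
         "registration and login routes using PostgreSQL.",
    language="TypeScript 5.x",
    framework="Express 4.x",
    constraints=["Must run on Node 18"],
    input_format="JSON request bodies",
    output_format="JSON responses with appropriate HTTP status codes",
)
print(build_prompt(example))
```

The structure matters less than the discipline: if a field is hard to fill in, the prompt is probably still too vague.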

Tools I Use

- GitHub Copilot: For real-time, inline code suggestions as I type.

- ChatGPT / Claude: For high-level architectural discussions and complex debugging.

- Specialized Coding Assistants: Such as Cursor or language-specific plugins for data science.

The Human Element Remains Essential

Despite the power of LLMs, human judgment is irreplaceable. In the 2023 Stack Overflow Developer Survey, about 70% of respondents said they were using or planning to use AI tools in their development process, yet only a small share said they highly trust the accuracy of the output. Use AI to handle the “how,” but you must remain the master of the “why.”

Conclusion

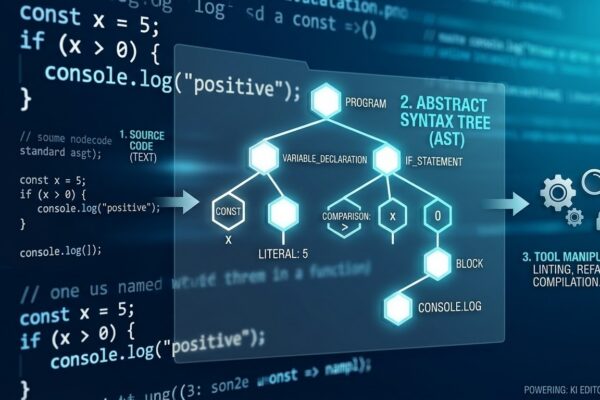

Building software with LLMs isn’t just an “easier” way to work; used with care, it can be a better one. Offloading boilerplate, documentation, and first-pass debugging leaves more time for architecture and review, which is where errors get caught and systems become more robust.

What’s your experience with LLMs in software development? Have you found specific use cases where they excel or fall short? Share your thoughts in the comments below.