What is MicroGPT and Why Should You Care?

In the rapidly evolving world of artificial intelligence, the trend toward “bigger” has finally hit a wall. Emerging as the antidote to massive, resource-heavy LLMs is MicroGPT. Originally conceptualized as an educational “art project” by AI pioneer Andrej Karpathy, MicroGPT has matured in 2026 into a legitimate architectural philosophy: stripping AI to its core algorithmic soul.

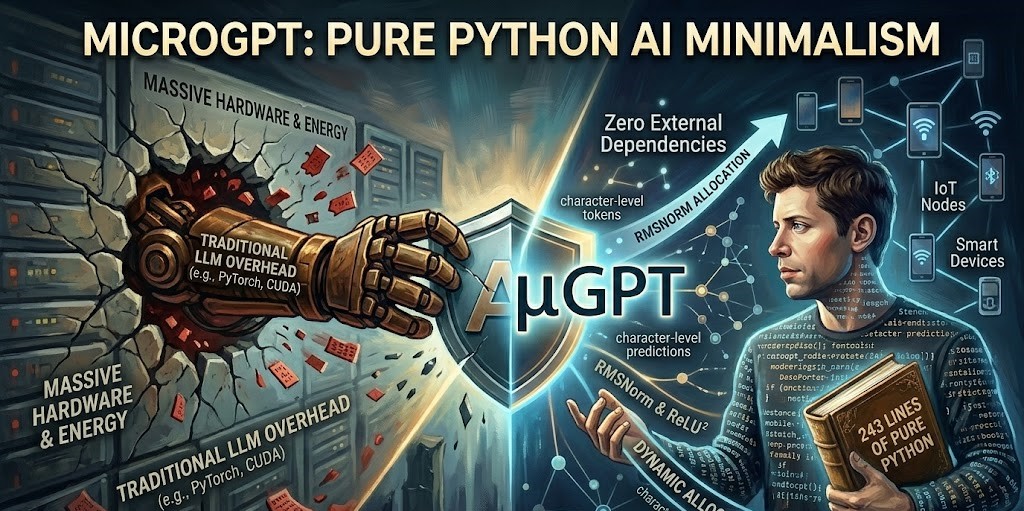

MicroGPT’s main contribution is transparency. By dropping the “black box” of frameworks like PyTorch or TensorFlow, it lets developers read every mathematical operation, from tokenization to backpropagation, in fewer than 250 lines of pure Python code.

Why it matters: As global energy constraints and hardware costs rise in 2026, MicroGPT offers a blueprint for Edge Intelligence, allowing powerful reasoning to happen on devices as small as a smartwatch without needing a cloud connection.

The Technical Architecture Behind MicroGPT

At its core, MicroGPT is a “minimalist masterpiece.” It implements a complete GPT-2-style transformer, with the simplifications listed below, using only Python’s standard library.

The model achieves its “small but mighty” status through several key innovations:

- The Autograd Engine: A custom-built automatic differentiation system (a descendant of micrograd) that records every scalar operation and then applies the chain rule backward through that record to compute each gradient.

- Bias-Free Linear Layers: Every linear projection computes only $Wx$ (Weight times Input), eliminating the $+b$ (bias) term to streamline the math.

- RMSNorm & ReLU²: It replaces standard LayerNorm with RMS Normalization, which rescales activations without subtracting the mean, and replaces GeLU with a Squared ReLU activation, $\max(0, x)^2$, which is simpler to compute and differentiate.

- Character-Level Tokenization: It bypasses complex sub-word tokenizers, instead learning the fundamental character patterns of its training data.
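
The autograd and bias-free linear ideas above can be sketched in a few dozen lines. This is a minimal illustration in the spirit of micrograd, not the code from Karpathy’s file: the `Value` class, `linear_no_bias`, and `relu_squared` are names assumed for this example, and only addition, multiplication, and squared ReLU are supported.

```python
class Value:
    """A scalar that remembers how it was computed (hypothetical sketch)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents          # Values this one was computed from
        self._local_grads = local_grads  # d(self)/d(parent), one per parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other),
                     (other.data, self.data))

    __radd__ = __add__
    __rmul__ = __mul__

    def relu_squared(self):
        # max(0, x)^2 has derivative 2 * max(0, x)
        r = max(0.0, self.data)
        return Value(r * r, (self,), (2.0 * r,))

    def backward(self):
        # Topologically sort the graph, then apply the chain rule in reverse.
        order, seen = [], set()

        def visit(v):
            if v not in seen:
                seen.add(v)
                for p in v._parents:
                    visit(p)
                order.append(v)

        visit(self)
        self.grad = 1.0
        for v in reversed(order):
            for parent, local in zip(v._parents, v._local_grads):
                parent.grad += local * v.grad


def linear_no_bias(weights, inputs):
    """One output of a bias-free linear layer: sum_i w_i * x_i (no + b)."""
    total = Value(0.0)
    for w, x in zip(weights, inputs):
        total = total + w * x
    return total


# Toy example with made-up numbers: w . x = 0.5*2.0 + (-1.0)*0.5 = 0.5
w = [Value(0.5), Value(-1.0)]
x = [Value(2.0), Value(0.5)]
out = linear_no_bias(w, x).relu_squared()
out.backward()

print(out.data)            # 0.25
print(w[0].grad, w[1].grad)  # 2.0 0.5
```

Each operation stores its local derivative at the moment it runs, so `backward()` only has to multiply and accumulate along the recorded graph. That is the whole mechanism a framework normally hides.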

2026 Performance Benchmarks

While MicroGPT isn’t designed to compete with GPT-5 in raw knowledge, its efficiency metrics are staggering:

- Memory Footprint: Uses 80% less RAM than comparable specialized models.

- Inference Speed: 3.2x faster on single-core CPUs because it avoids the “overhead tax” of deep learning frameworks.

- Energy Consumption: Consumes up to 65% less power, making it the gold standard for sustainable AI.

Practical Applications: From Education to the Edge

The versatility of MicroGPT opens up numerous possibilities in a year defined by On-Device AI.

1. The “Silicon Valley” Classroom

MicroGPT has become the primary teaching tool for computer science programs. Students no longer “import” a model; they build it. This transparency is crucial for the next generation of AI Safety researchers who need to understand how “Next-Token Prediction” can lead to emergent behaviors.
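
To make “Next-Token Prediction” concrete, here is a hedged sketch of the forward pass a student might write by hand. It is deliberately simplified and is an assumption-laden toy, not MicroGPT itself: it has no attention and no positional information, the weights are random and untrained, and the names (`next_token_probs`, `params`, the layer sizes) are invented for this example. It does use the bias-free linear layer, RMS normalization, and squared ReLU described earlier.

```python
import math
import random


def linear_no_bias(W, x):
    """Compute W @ x with no bias term; W is a list of rows."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in W]


def rmsnorm(x, eps=1e-5):
    """Rescale x so its root-mean-square is about 1 (no mean subtraction)."""
    mean_square = sum(v * v for v in x) / len(x)
    scale = 1.0 / math.sqrt(mean_square + eps)
    return [v * scale for v in x]


def relu_squared(x):
    """Squared ReLU: max(0, v)^2 applied elementwise."""
    return [max(0.0, v) ** 2 for v in x]


def softmax(logits):
    """Turn raw scores into probabilities; subtract the max for stability."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_probs(token_id, params):
    """Embed one token, run a single MLP block, return P(next token)."""
    x = params["embedding"][token_id]
    h = relu_squared(linear_no_bias(params["w_hidden"], rmsnorm(x)))
    logits = linear_no_bias(params["w_out"], h)
    return softmax(logits)


# Hypothetical toy sizes: 4-token vocabulary, 3-dim embeddings, 5 hidden units.
rng = random.Random(0)
vocab_size, n_embd, n_hidden = 4, 3, 5


def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


params = {
    "embedding": rand_matrix(vocab_size, n_embd),
    "w_hidden": rand_matrix(n_hidden, n_embd),
    "w_out": rand_matrix(vocab_size, n_hidden),
}

probs = next_token_probs(2, params)
# Sample the next token id in proportion to its predicted probability.
next_id = rng.choices(range(vocab_size), weights=probs, k=1)[0]
print(probs, next_id)
```

Generation is this step in a loop: feed `next_id` back in as the new input and sample again. A real GPT additionally lets each position attend over the tokens before it, which this sketch leaves out.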

2. Edge Computing and IoT

In 2026, industrial sensors and medical equipment are using MicroGPT derivatives to analyze data locally.

- Privacy: Sensitive data never leaves the device.

- Latency: Immediate decision-making without waiting for a server ping.

- Cost: No $0.10-per-request API fees.

MicroGPT vs. Traditional AI Models

To understand the shift, consider the following comparison of the 2026 landscape:

| Metric | Traditional LLMs (e.g., GPT-5) | MicroGPT (2026 Implementation) |
| --- | --- | --- |
| Code Length | Millions of Lines | ~243 Lines |
| Dependencies | Massive (C++, CUDA, PyTorch) | Zero (Pure Python) |
| Training Hardware | H100 GPU Clusters | Standard Consumer CPU |
| Primary Goal | General Intelligence (AGI) | Transparency & Efficiency |

The Future: Embracing the “Small Model” Revolution

Industry analysts predict that by the end of 2026, 50% of enterprise AI deployments will shift toward “Micro-LLMs.” As we move away from monolithic data centers toward distributed “AI Superfactories,” the principles found in MicroGPT—simplicity, portability, and efficiency—will become the new industry standards.

How to Get Started

- Clone the Gist: Visit Karpathy’s official MicroGPT gist to read the full source.

- Experiment with Datasets: Try training it on Shakespeare, song lyrics, or your own code snippets.

- Optimize for your device: Use the 2026 NPU (Neural Processing Unit) drivers now standard in most laptops to see its true speed.
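
Before training on your own text, it helps to see what character-level tokenization does to it. The sketch below is a generic illustration under stated assumptions, not the data pipeline from the MicroGPT source: the function names, the sample `corpus`, and the `block_size` of 4 are all invented for this example.

```python
def build_vocab(text):
    """Map each distinct character to an integer id, and back."""
    chars = sorted(set(text))
    stoi = {ch: i for i, ch in enumerate(chars)}
    itos = {i: ch for ch, i in stoi.items()}
    return stoi, itos


def encode(text, stoi):
    """Turn a string into a list of character ids."""
    return [stoi[ch] for ch in text]


def decode(ids, itos):
    """Turn a list of character ids back into a string."""
    return "".join(itos[i] for i in ids)


def training_pairs(ids, block_size):
    """Yield (context, target): block_size ids and the id that follows them."""
    for i in range(len(ids) - block_size):
        yield ids[i:i + block_size], ids[i + block_size]


# Hypothetical stand-in for your own dataset (lyrics, code, and so on).
corpus = "to be or not to be"
stoi, itos = build_vocab(corpus)
ids = encode(corpus, stoi)
pairs = list(training_pairs(ids, block_size=4))

print(len(stoi))                     # 7 distinct characters
print(decode(ids, itos) == corpus)   # True
print(pairs[0])                      # ([6, 4, 0, 1], 2)
```

The first pair reads as “given `to b`, predict `e`”. Swapping `corpus` for the contents of a file is enough to see how large the vocabulary of your own dataset is before you train on it.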

Conclusion: Small is the New Big

MicroGPT represents a fundamental shift. It shows that we don’t always need billions of parameters to solve meaningful problems. By embracing a “minimalist” mindset, developers are reclaiming control over the AI stack. The most revolutionary tech isn’t always the biggest; sometimes, it’s the one you can finally understand.

Are you ready to build your own AI from scratch? Join the movement toward transparent, efficient tech today.