Introduction: A Critical Juncture for AI Governance

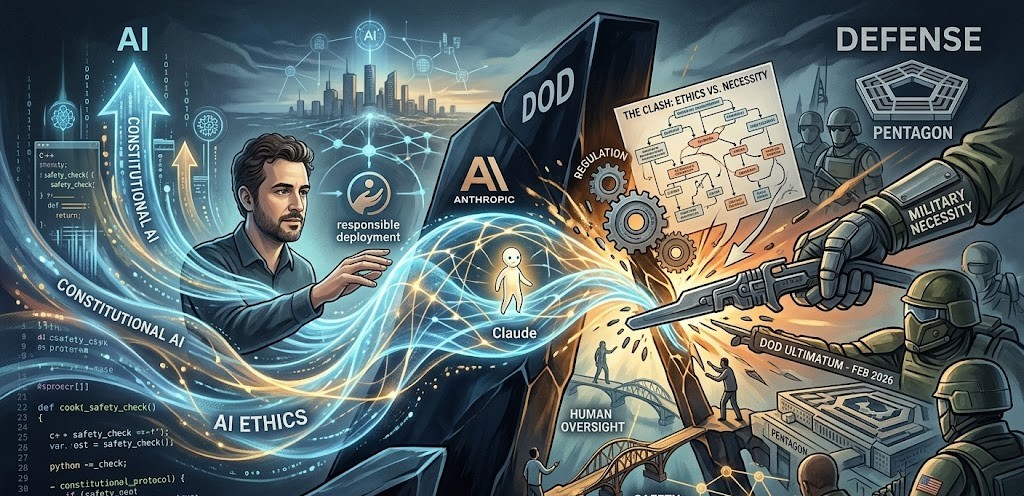

The tech world is currently witnessing an unprecedented standoff. On February 26, 2026, Anthropic CEO Dario Amodei officially announced that the company “cannot in good conscience” accede to the Pentagon’s demands for unrestricted use of its Claude AI models.

This public clash follows an ultimatum from Defense Secretary Pete Hegseth, requiring Anthropic to lift its safety guardrails or risk being designated a “supply chain risk.” As AI continues to integrate into national security, the line between innovation and ethical responsibility has never been thinner.

⚡ What is Constitutional AI?

Constitutional AI is a training methodology developed by Anthropic that aligns AI behavior with a written set of principles (a “constitution”) rather than relying solely on human feedback. The model first critiques and revises its own responses against those principles; Reinforcement Learning from AI Feedback (RLAIF) then trains it to prefer the responses that better follow them, with the aim of keeping it helpful, honest, and harmless, even in sensitive defense contexts.

The Friday Ultimatum: Why These Discussions Matter

The current friction centers on two “bright red lines” that Amodei refuses to cross: mass surveillance of Americans and fully autonomous lethal weaponry. While the Pentagon argues these constraints impede “mission success” in grey-zone operations, Anthropic maintains that these guardrails are essential to prevent AI from undermining democratic values.

The Economic Stakes of AI Safety

The importance of this standoff is mirrored in the markets. According to a 2026 industry outlook:

- Global AI Safety Market: Projected to reach $29.8 billion by 2033.

- CAGR: A projected 37.2%, as governments prioritize “Safety by Design.”

- Growth Drivers: Increased regulatory scrutiny (like the EU AI Act) and the demand for explainable AI in safety-critical sectors.
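As a rough sanity check on the figures above, the standard compound-growth formula, end = start × (1 + CAGR)^years, can be inverted to see what starting market size they imply. The outlook's base year is not given here, so the 7-year horizon (2026 to 2033) in this sketch is an assumption, and `implied_start_value` is an illustrative helper name rather than anything from the outlook itself.

```python
def implied_start_value(end_value: float, cagr: float, years: int) -> float:
    """Invert end = start * (1 + cagr) ** years to recover the starting value."""
    return end_value / (1 + cagr) ** years


# $29.8 billion by 2033 at a 37.2% CAGR; the 7-year horizon is an assumption.
start = implied_start_value(29.8, 0.372, 7)
print(f"Implied starting market size: ${start:.2f} billion")
```

Changing the assumed horizon changes the implied starting size considerably, which is one reason headline growth projections are worth reading alongside their base year.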

Anthropic’s “Safety-First” Architecture

Anthropic has distinguished itself from competitors like OpenAI and xAI through its commitment to interpretability. While any large model can “hallucinate” or follow harmful instructions, Claude is trained against a written constitution intended to reduce those failures.

How Constitutional AI Works

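- Supervised Phase: The model critiques its own responses against the constitution’s principles, revises them, and is fine-tuned on the revised versions.

- Reinforcement Phase: The AI evaluates which of two responses better aligns with its “Constitution,” and those preferences become the training signal.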

- Result: A model that can explain why it refuses a harmful request, rather than simply going silent.
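The two phases above can be sketched in a few lines of Python. This is a toy illustration, not Anthropic's implementation: the `CONSTITUTION` list, its keyword-based check, and the functions `critique`, `revise`, and `prefer` are all hypothetical stand-ins for what would be language-model calls in a real system.

```python
from typing import List, Optional

# Hypothetical one-rule "constitution": each principle names a phrase to avoid.
CONSTITUTION: List[dict] = [
    {"principle": "Do not give instructions for building weapons.",
     "banned_phrase": "build a weapon"},
]


def critique(response: str) -> Optional[str]:
    """Return the first principle the response violates, or None."""
    for rule in CONSTITUTION:
        if rule["banned_phrase"] in response.lower():
            return rule["principle"]
    return None


def revise(response: str) -> str:
    """Supervised phase (toy): critique the draft and rewrite it if needed."""
    violated = critique(response)
    if violated is None:
        return response
    # A real system would ask the model to rewrite its draft;
    # this sketch substitutes a refusal that explains itself.
    return f"I can't help with that, because of this principle: {violated}"


def prefer(response_a: str, response_b: str) -> str:
    """Reinforcement phase (toy): pick the response that better fits the constitution."""
    a_ok = critique(response_a) is None
    b_ok = critique(response_b) is None
    if b_ok and not a_ok:
        return response_b
    # Otherwise keep the first response; a real AI labeler would score both.
    return response_a


draft = "Sure, here is how to build a weapon at home."
revised = revise(draft)
chosen = prefer(draft, revised)
print(chosen)
```

In the published method, the pairwise choices made in the second step are used to train a preference model whose scores act as the reward during reinforcement learning; the sketch stops at the choice itself.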

Implications for the Tech Industry

The Pentagon’s threat to invoke the Defense Production Act, a Cold War-era law, to force Anthropic to lift Claude’s safeguards has sent a chill through Silicon Valley.

The Challenges Ahead

- Regulatory Scrutiny: AI companies may face “Supply Chain Risk” labels if their ethical policies conflict with national interests.

- Operational Integrity: Can a company remain “public benefit” focused while under a military contract?

- Market Fragmentation: We may see a split between “Government-Grade AI” (unrestricted) and “Consumer AI” (highly governed).

The Path Forward: Global AI Governance

This is not just an American issue. Global frameworks are struggling to keep pace:

- EU AI Act: Mandates rigorous testing for high-risk systems.

- OECD AI Principles: Establish international standards for “Trustworthy AI.”

- The DoD Vision: Pushes for “lawful use” flexibility that challenges corporate ethics.

Expert Perspectives

Dr. Sarah Chen, an AI ethics researcher, warns: “If we allow military necessity to strip away the few technical guardrails we have, we risk a global AI arms race with no ‘off’ switch.”

Conversely, Marcus Rodriguez, a former DoD advisor, argues: “We cannot let a for-profit company dictate the tactical limits of the U.S. Military while our adversaries are developing AI without any such conscience.”

Conclusion: A Critical Juncture

Dario Amodei’s stand against the Pentagon marks a turning point. It is no longer just about what AI can do, but what we allow it to do. For the tech industry, the result of this negotiation will set the precedent for the next decade of AI development.

What are your thoughts? Should a tech company have the right to limit how the military uses its tools? Share your perspective in the comments below.