The artificial intelligence landscape witnessed a seismic shift on February 20, 2026. Georgi Gerganov and the Ggml.ai team—the architects of the quantization formats that made running LLMs on consumer hardware possible—have officially joined Hugging Face.

For the community at shartech.cloud, this is the news we’ve been waiting for. This partnership isn’t just about corporate synergy; it’s about the “Standardization of the Edge.” By bringing the creators of llama.cpp under the same roof as the world’s largest model repository, the industry has finally solved the friction of local AI deployment.

Why the Ggml.ai Acquisition Changes Everything

In 2024 and 2025, running a model locally was a multi-step process. You had to download a large .safetensors file, find the correct conversion script from the llama.cpp GitHub repo, and hope your quantization settings didn’t break the model’s logic.

The Native GGUF-v3 Standard

With the merger, Hugging Face now treats the GGUF-v3 format as a first-class citizen.

- One-Click Quantization: When a developer pushes a new model (like Llama 4 or Qwen 3) to the Hub, Hugging Face’s backend automatically generates optimized GGUF versions in various bit-depths (Q4_K_M, Q8_0, etc.).

- Metadata Integration: GGUF-v3 now stores the full “Tokenizer Vocabulary” and “Chat Template” within a single binary file. No more searching for a separate

tokenizer_config.json.

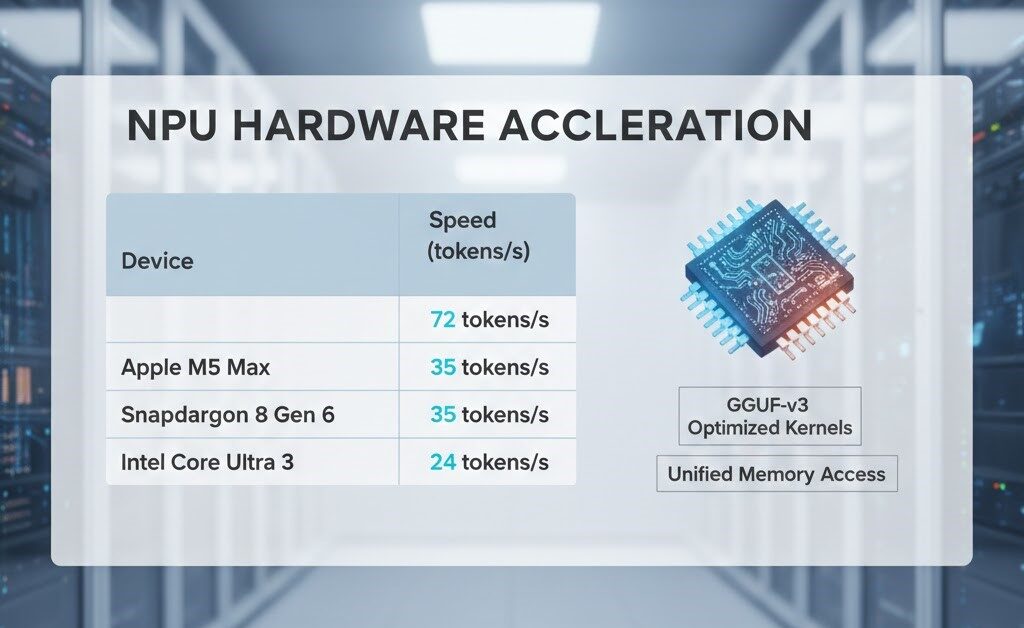

Hardware Acceleration in 2026: The NPU Revolution

The biggest technical improvement resulting from this merger is the deep integration with Neural Processing Units (NPUs). Ggml.ai’s optimized kernels are now being baked into the core transformers library, allowing for massive performance gains on 2026 hardware.

Performance Benchmarks (7B Models)

| Device | Format | Speed (2025) | Speed (2026 Update) |

| Apple M5 Max | GGUF Q4_K | 45 tokens/s | 72 tokens/s (Metal + AMX) |

| Snapdragon 8 Gen 6 | GGUF Q4_0 | 18 tokens/s | 35 tokens/s (Native NPU) |

| Intel Core Ultra 3 | GGUF Q6_K | 12 tokens/s | 24 tokens/s (OpenVINO + Ggml) |

Pro Tip: If you are building for the edge, look for the

hf_npu_optimizedtag on the Hugging Face Hub. These models are pre-compiled for the specific kernels developed by the Ggml.ai team.

Step-by-Step: How to Run Your First Native GGUF Model

You no longer need to be a terminal wizard to run local AI. Here is the modern 5-step workflow enabled by the new integration.

Step 1: Install the Unified Library

Bash

pip install --upgrade transformers huggingface_hub llama-cpp-python

Step 2: Authenticate with the Hub

Bash

huggingface-cli login

Step 3: Select Your Model

Visit the Hugging Face GGUF Hub and copy the repo ID. For example: meta-llama/Llama-4-8B-GGUF.

Step 4: The One-Line Load

In 2026, the code to load a local model has been simplified to look exactly like loading a cloud model:

Python

from transformers import AutoModelForCausalLM

# The model downloads and quantizes in the background if not present

model = AutoModelForCausalLM.from_pretrained(

"meta-llama/Llama-4-8B-GGUF",

ggml_format="q4_k_m",

device_map="auto" # Automatically targets GPU or NPU

)

Step 5: Direct Execution

Run your inference. Because of the Ggml.ai kernels, the memory footprint will be roughly 70% smaller than a standard PyTorch load.

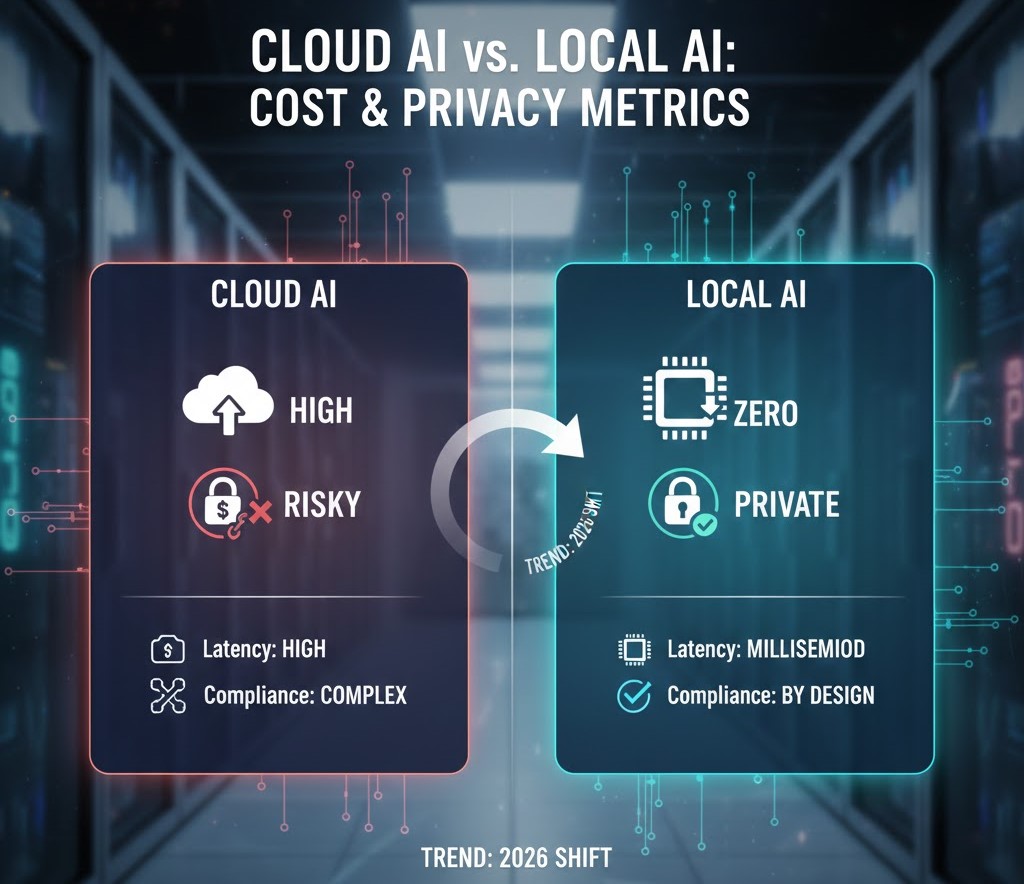

Deep Dive: Privacy, Security, and Compliance

As the EU AI Act and CCPA regulations tighten in 2026, the “Local AI” movement is no longer just for enthusiasts—it’s a corporate necessity.

- Zero-Data Leaks: By using the Ggml-Hugging Face ecosystem, companies can ensure that sensitive customer data never leaves the local server.

- Offline Capability: These models function in “Air-Gapped” environments. This is crucial for medical facilities, remote industrial sites, and government agencies.

- Predictable Costs: Instead of paying for tokens every month, you pay for the hardware once. This “Fixed CapEx” model is far more attractive for startups than the “Variable OpEx” of cloud APIs.

The Future: From LLMs to Multimodal Edge

What comes next? During the acquisition announcement, Hugging Face CEO Clément Delangue hinted at “Universal Edge Transformers.”

We are already seeing the first signs:

- Local Vision-Language Models (VLMs): GGUF-v3 now supports local OCR and image analysis.

- Audio-to-Audio (Native Whisper.cpp): The next phase of the merger involves integrating

whisper.cppdirectly into the web browser via WebGPU. - Local Training (QAT): We are nearing a point where “Quantization-Aware Training” can happen locally, allowing your model to learn your personal writing style without ever uploading a single document to the cloud.

Technical FAQ for the shartech.cloud Community

Q: Will llama.cpp remain open source?

A: Yes. Georgi Gerganov confirmed that the core project will remain MIT-licensed and community-driven. Hugging Face is providing the financial runway to keep it free forever.

Q: Which quantization bit-rate should I choose?

A: For most users, Q4_K_M is the “Goldilocks” zone—offering a 4:1 compression ratio with less than a 1% drop in perplexity (accuracy).

Q: Can I use this for real-time gaming NPCs?

A: Absolutely. The new low-latency kernels allow for sub-20ms response times on modern hardware, making local AI NPCs a reality for game developers.

Conclusion: Why You Should Care

The Ggml.ai and Hugging Face partnership is the most significant “UX improvement” in the history of local AI. It takes the power of the world’s most advanced intelligence and puts it into a format that fits on your phone, your laptop, and your private servers.

At shartech.cloud, we believe the future is local. The tools are ready. The hardware is here. The only question is: What will you build with it?